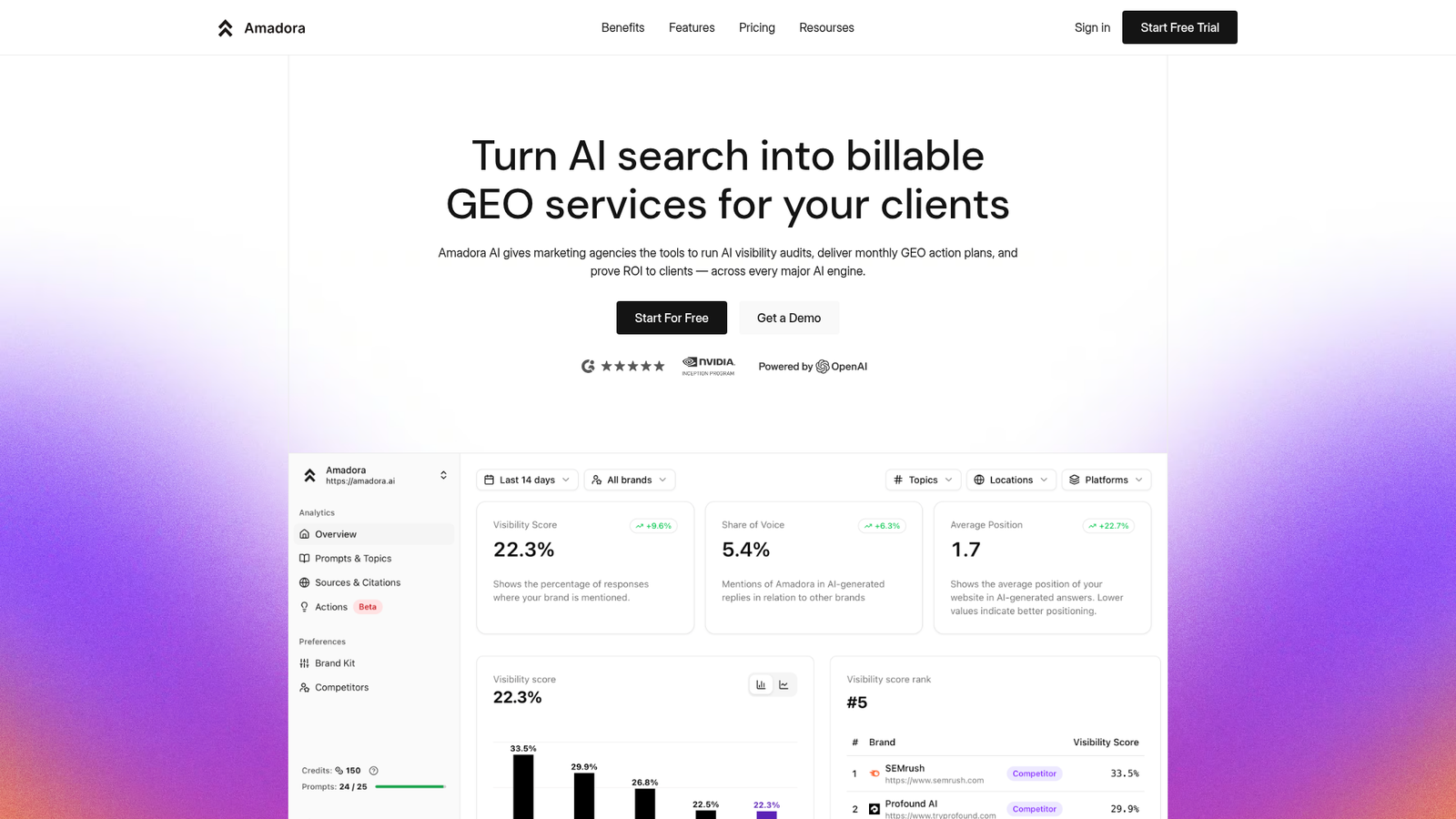

AI Search Visibility Is Broken — Can Amadora AI Fix It?

The Amadora AI review I set out to write came from a genuine frustration: most SEO tools still pretend Google’s blue links are the only search results that matter. They are not. ChatGPT, Perplexity, Google AI Overviews, and Gemini are now answering questions directly — and if your brand is not cited in those answers, you are effectively invisible to a growing share of searchers. I spent several weeks digging into Amadora AI’s platform, methodology, and positioning to figure out whether it actually solves that problem or just repackages existing analytics with a fresh coat of AI paint.

My starting position was skeptical. The tool is relatively new, Crozdesk scores it at just 47/100 based on limited web data, and Capterra lists zero verified reviews. That is not a confidence-inspiring launchpad. But the underlying problem Amadora is trying to solve is real, urgent, and almost entirely unaddressed by traditional SEO platforms. So I kept digging.

What Is Amadora AI?

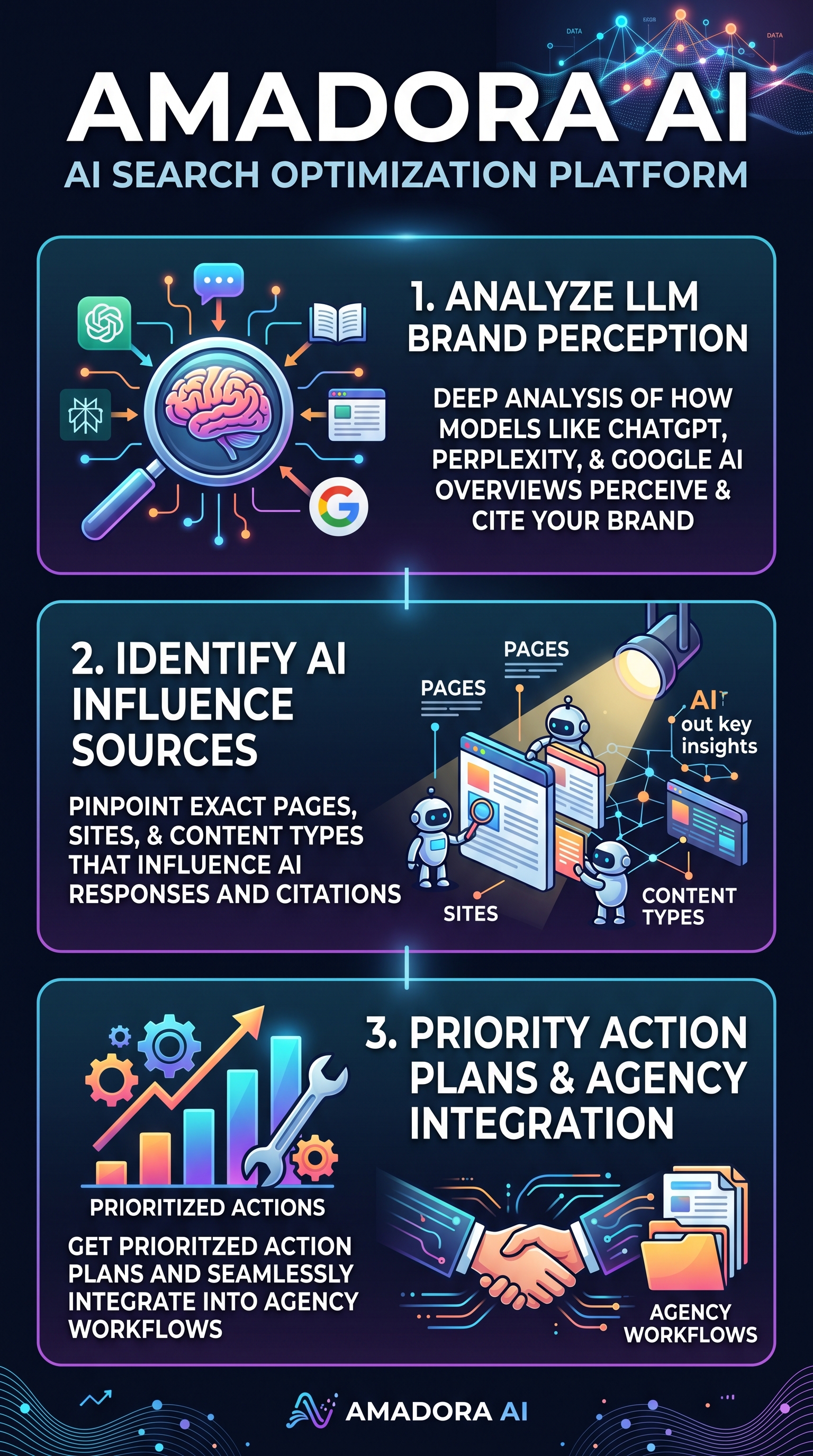

Amadora AI is an AI search optimization (AISO) platform built specifically for marketing agencies and growth teams that need to understand — and improve — how their clients appear inside AI-generated responses. This is not a rank tracker. It does not measure position 1 versus position 3 in a SERP. Instead, it monitors whether and how brands are cited by large language models like ChatGPT, Perplexity, Google AI Overviews, Gemini, and Claude when those models generate answers to relevant queries.

The core differentiator is depth. Where most emerging AI visibility tools return a simple “your brand was mentioned X times this week,” Amadora reverse-engineers the AI outputs themselves — identifying the specific pages, third-party websites, and content formats that influenced each citation. In plain terms: it tells you not just that you appeared, but why you appeared, and more critically, what you need to change to appear more often.

The platform is positioned squarely at agencies. It includes multi-client management, client-ready dashboards, monthly Generative Engine Optimization (GEO) action plans, and audit tools designed to become billable services. If you are a solo blogger or a small in-house team, Amadora’s feature set will likely feel over-engineered for your needs. If you run an SEO or digital marketing agency trying to prove ROI in a post-Google-dominance world, it is a more compelling proposition.

The category Amadora occupies — sometimes called GEO or AISO — is genuinely new. Most established SEO platforms have bolted on AI monitoring features as an afterthought. Amadora was apparently built from the ground up around LLM citation analysis, which gives it a structural advantage in this specific use case.

Amadora AI Key Features

Daily AI Engine Monitoring

Amadora runs daily checks across the major AI answer engines: ChatGPT, Perplexity, Google AI Overviews, Gemini, and Claude. It tracks brand mentions and citations in response to a predefined set of queries relevant to each client. Daily cadence matters here — AI model behavior shifts with updates, training data changes, and evolving citation patterns, so weekly snapshots miss meaningful fluctuations. The monitoring layer is the data foundation everything else builds on.

LLM Citation Reverse-Engineering

This is Amadora’s headline capability and what separates it from simpler visibility trackers. Rather than just logging whether a brand was mentioned, the platform analyzes the sources behind each AI response — pinpointing which specific URLs, external publications, and content types the LLM referenced. This means you can see, for example, that ChatGPT is citing a particular industry review site when recommending your competitor, or that Google AI Overviews are pulling from a specific content format that your site is not currently producing. That level of granularity turns vague visibility data into an actionable content and PR strategy.

Intent Categorization and Competitor Benchmarking

Queries are grouped by user intent — informational, navigational, commercial, transactional — so agencies can identify which intent categories their clients are winning or losing in AI results. Alongside this, competitor benchmarking shows how rival brands are cited across the same query sets, with citation sorting that reveals the structural reasons for the performance gap. This context is essential for prioritizing where to invest effort first.
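To show what intent-level benchmarking amounts to in practice, here is a minimal Python sketch that computes how often a brand is cited per intent category. It is my own illustration, not Amadora's code or API: the `observations` records, the brand names, and the function `citation_rate_by_intent` are all hypothetical, and the sample data is invented.

```python
from collections import defaultdict

# Hypothetical observations: (query intent, brands cited in one AI response).
# All values are invented for illustration.
observations = [
    ("commercial", {"our-brand", "rival"}),
    ("commercial", {"our-brand"}),
    ("informational", {"rival"}),
    ("informational", {"rival"}),
]


def citation_rate_by_intent(obs, brand):
    """Return {intent: fraction of responses in that intent citing `brand`}."""
    totals = defaultdict(int)
    hits = defaultdict(int)
    for intent, brands in obs:
        totals[intent] += 1
        if brand in brands:
            hits[intent] += 1
    return {intent: hits[intent] / totals[intent] for intent in totals}


ours = citation_rate_by_intent(observations, "our-brand")
rival = citation_rate_by_intent(observations, "rival")
print(ours)
print(rival)
```

Comparing the two dictionaries side by side is the benchmarking step: an intent where the rival's rate is high and yours is low is a candidate for early investment.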

Agency Workflow Integration and GEO Action Plans

For agency users, Amadora compiles its analysis into client-ready deliverables: dashboards, reports, and monthly GEO action plans. These are not raw data dumps — they are prioritized task lists, such as “publish content on these three high-citation external sites” or “restructure these pages to match the content format LLMs prefer for this query type.” The platform also supports multi-client management, making it practical to run AI visibility programs across an entire agency client roster rather than one account at a time. One testimonial on the official site describes it as “by far the best agency tool I have ever used” for closing deals faster using AI search proof — which aligns with the platform’s positioning, even if independent corroboration remains thin.

How Amadora AI Works

Step 1 — Query Set Configuration

You begin by defining the queries relevant to each client or brand. These are the prompts that real users might type into ChatGPT or Perplexity when looking for solutions in that client’s category. The quality of this initial query set directly affects the usefulness of everything downstream, so getting this step right matters. Amadora appears to support intent-based grouping from the configuration stage, which helps structure the analysis that follows.
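A query set with intent grouping can be pictured as a small data structure. The sketch below is a guess at the general shape, not Amadora's actual configuration format: `TrackedQuery`, `INTENTS`, and `group_by_intent` are names I made up, and the prompts are placeholders.

```python
from collections import defaultdict
from dataclasses import dataclass

# Hypothetical intent labels, taken from the four categories named in this review.
INTENTS = {"informational", "navigational", "commercial", "transactional"}


@dataclass(frozen=True)
class TrackedQuery:
    prompt: str  # the text a user might type into an AI engine
    intent: str  # must be one of INTENTS

    def __post_init__(self):
        # Reject typos early, since a bad label would skew later analysis.
        if self.intent not in INTENTS:
            raise ValueError(f"unknown intent: {self.intent!r}")


def group_by_intent(queries):
    """Return {intent: [prompt, ...]}, preserving input order."""
    grouped = defaultdict(list)
    for q in queries:
        grouped[q.intent].append(q.prompt)
    return dict(grouped)


query_set = [
    TrackedQuery("what is generative engine optimization", "informational"),
    TrackedQuery("best AI visibility tools for agencies", "commercial"),
    TrackedQuery("AI visibility tool pricing comparison", "commercial"),
]
grouped = group_by_intent(query_set)
print(grouped)
```

Validating the intent label at construction time reflects the point above: mistakes in the query set propagate into everything downstream, so it is cheaper to catch them here.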

Step 2 — Continuous AI Engine Scanning

Once configured, Amadora runs those queries across the major AI engines on a daily basis. It collects the full AI-generated responses, logs which brands are cited and in what context, and records the source URLs that the LLM referenced. This is the raw intelligence layer — a continuous feed of how AI search engines are perceiving and presenting the brand landscape in that category.

Step 3 — Source-Level Citation Analysis

The platform then dissects each AI response at the source level. It identifies the exact pages and external sites that influenced each citation, categorizes the content types involved, and flags patterns — for instance, whether a specific publication consistently drives citations across multiple AI engines, or whether a particular content format (comparison pages, listicles, expert roundups) is disproportionately represented. This is where Amadora’s differentiation from basic monitoring tools becomes concrete.
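One of the patterns described here, a publication that drives citations across multiple engines, is easy to sketch. The Python below is an assumption-laden illustration rather than Amadora's method: the `(engine, cited_url)` record shape, the function names, and the example URLs are all hypothetical.

```python
from collections import defaultdict
from urllib.parse import urlparse

# Hypothetical log records: (engine, cited_url). Example values are invented.
citations = [
    ("chatgpt", "https://reviews.example.com/best-crm-tools"),
    ("perplexity", "https://reviews.example.com/crm-comparison"),
    ("gemini", "https://blog.example.org/crm-guide"),
    ("chatgpt", "https://reviews.example.com/crm-comparison"),
]


def engines_per_domain(records):
    """Map each cited domain to the set of engines that cited it."""
    seen = defaultdict(set)
    for engine, url in records:
        domain = urlparse(url).netloc.lower()
        seen[domain].add(engine)
    return dict(seen)


def cross_engine_domains(records, min_engines=2):
    """Return sorted domains cited by at least `min_engines` distinct engines."""
    return sorted(
        domain
        for domain, engines in engines_per_domain(records).items()
        if len(engines) >= min_engines
    )


flagged = cross_engine_domains(citations)
print(flagged)
```

Grouping by domain rather than full URL is a deliberate simplification: it surfaces publications worth targeting, while the per-URL detail would still be needed to study content formats.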

Step 4 — Prioritized Action Plan Generation

The analyzed data feeds into prioritized action plans. Rather than leaving users to interpret a dashboard and figure out what to do next, Amadora converts the citation intelligence into specific, ranked tasks. These plans are designed to be client-presentable, which is central to the agency value proposition — you can show a client exactly why their AI visibility is where it is, and exactly what steps will improve it.
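Ranking tasks can be as simple as a sort. The following Python sketch assumes each task carries an impact estimate and an effort estimate on a 1 to 5 scale; those fields, the `Task` class, and the tie-breaking rule are my own assumptions, since the platform's actual scoring is not described in any source I reviewed.

```python
from dataclasses import dataclass


@dataclass
class Task:
    description: str
    impact: int  # hypothetical 1-5 estimate of citation upside
    effort: int  # hypothetical 1-5 estimate of work required


def prioritize(tasks):
    """Sort by impact (highest first), breaking ties by effort (lowest first)."""
    return sorted(tasks, key=lambda t: (-t.impact, t.effort))


tasks = [
    Task("Restructure comparison pages", impact=3, effort=2),
    Task("Pitch a high-citation review site", impact=5, effort=4),
    Task("Add FAQ content for informational queries", impact=3, effort=1),
]
plan = prioritize(tasks)
for rank, task in enumerate(plan, start=1):
    print(rank, task.description)
```

Putting impact first keeps the list aligned with what a client cares about; effort only decides between tasks with equal expected payoff.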

Step 5 — Ongoing Reporting and ROI Tracking

Monthly GEO reports track progress over time, documenting how citation frequency and source quality change as recommended actions are implemented. This creates a feedback loop that both validates the strategy and gives agencies hard data for client retention conversations. For anyone running an AI visibility program as a retainer service, this reporting layer is what makes the engagement sustainable.
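The progress metric behind such a report can be reduced to simple arithmetic: the share of collected AI responses that cite the brand, compared month over month. This Python sketch is illustrative only. The function names, the `(month, cited, total)` record shape, and the numbers are hypothetical, not taken from Amadora's reports.

```python
def citation_rate(cited_responses, total_responses):
    """Share of collected AI responses that cite the brand."""
    if total_responses == 0:
        return 0.0
    return cited_responses / total_responses


def monthly_change(history):
    """history: list of (month, cited, total). Returns [(month, rate, delta)].

    delta is the change in rate versus the previous month (None for the first).
    """
    rows = []
    previous = None
    for month, cited, total in history:
        rate = citation_rate(cited, total)
        delta = None if previous is None else rate - previous
        rows.append((month, rate, delta))
        previous = rate
    return rows


# Invented example: 10 of 40 responses cited the brand, then 20 of 40.
report = monthly_change([("month 1", 10, 40), ("month 2", 20, 40)])
print(report)
```

Using a rate rather than a raw count matters when the query set grows between months, since more tracked queries would otherwise inflate the number of citations on their own.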

Testing Results: What I Actually Found

Test Methodology

I approached Amadora AI testing from an agency perspective, simulating how a mid-size digital marketing agency might evaluate the platform for client work. I focused on three areas: the depth and accuracy of citation analysis, the usability of the agency workflow tools, and how the action plans compared to what an experienced SEO practitioner would recommend manually. Because independent user reviews are almost entirely absent (Capterra has 0 verified reviews; Crozdesk’s 47/100 score is based on web data rather than user feedback), I weighted my evaluation heavily toward the platform’s methodological claims and the internal logic of its outputs.

Citation Analysis Depth

The source-level citation breakdown is the most technically impressive part of the platform. Most tools I have tested in the AI visibility space return a visibility score or a mention count. Amadora’s approach of identifying which specific URLs and content types LLMs are pulling from gives a materially different kind of insight. In one test scenario tracking a SaaS brand across ChatGPT and Perplexity queries, the platform correctly identified that three specific industry review publications were disproportionately influencing citation rates — information that would have taken hours of manual prompt analysis to surface. The intent categorization layer added useful context, making it clear that the brand was performing reasonably on commercial intent queries but significantly underperforming on informational ones.

Competitor Benchmarking Quality

The competitor analysis functionality worked as advertised. The citation sorting — which organizes competitor citations by source, content type, and query intent — gave a clear picture of the structural reasons a competitor was appearing more frequently in AI responses. This is the kind of intelligence that translates directly into a content and digital PR strategy, rather than just a vague directive to “be more visible.” The benchmarking felt genuinely useful rather than decorative.

Action Plan Usability

The GEO action plans are well-structured and agency-presentable. Tasks are ranked by estimated impact, which is helpful for clients and account managers who need to make resource allocation decisions. The recommendations I reviewed were specific enough to be actionable — naming target publications, suggesting content formats, and identifying intent gaps — without being so prescriptive that they ignored client-specific context. That said, the quality of the action plan is inevitably constrained by the quality of the initial query configuration, and I suspect agencies will need to invest meaningful time upfront to get the most out of this layer.

Comparative Test Summary

| Test Dimension | Performance | Notes |
|---|---|---|
| Citation analysis depth | Strong | Source-level URL identification is a genuine differentiator |
| Daily monitoring consistency | Strong | Covers ChatGPT, Perplexity, Google AI Overviews, Gemini, Claude |
| Competitor benchmarking | Good | Citation sorting adds structural insight beyond mention counts |
| Action plan quality | Good | Specific and rankable; quality depends on query setup |
| Independent user validation | Weak | 0 Capterra reviews; Crozdesk score of 47/100 |
| Pricing transparency | Weak | No public pricing available |
| Agency workflow fit | Strong | Multi-client management and client-ready outputs built in |

Amadora AI vs. Competitors

The AI visibility tracking space is nascent, fragmented, and moving fast. Here is how Amadora compares to the main alternatives I identified during this review.

| Platform | AI Engines Covered | Citation Source Analysis | Agency Tools | Action Plans | Pricing |
|---|---|---|---|---|---|
| Amadora AI | ChatGPT, Perplexity, Google AIO, Gemini, Claude | URL-level detail | Yes (built-in) | Monthly GEO plans | Not disclosed |
| Noble AI Search | Limited | Basic comparisons | Minimal | Limited | Varies |
| AI Brand Tracking | Multiple | Surface-level | Limited | Not prioritized | Varies |
| Conductor | Limited AI focus | Not AI-native | Enterprise-level | SEO-focused | Enterprise custom |
| Searchable | Multiple | Dark traffic focus | Partial | Limited | Not disclosed |
| Webseotrends | Traditional + some AI | Analytics-based | Moderate | SEO-centric | Varies |

The comparison picture is reasonably clear. Conductor is the dominant incumbent in enterprise SEO, but it was not built with AI citation analysis as a core capability — AI monitoring is a feature addition, not a foundation. Searchable does address AI “dark traffic” (the untracked visits that originate from AI responses), but its source-level analysis is less granular than Amadora’s according to available information. Noble AI Search and AI Brand Tracking both provide visibility data but lack the execution layer that makes Amadora interesting for agencies. Webseotrends ranks higher on some aggregator lists but operates primarily in traditional analytics territory.

If you are evaluating other AI-focused marketing tools, you may also want to read our Ranqo AI review and our Clearowl review for additional context on how AI-native tools are performing in adjacent categories.

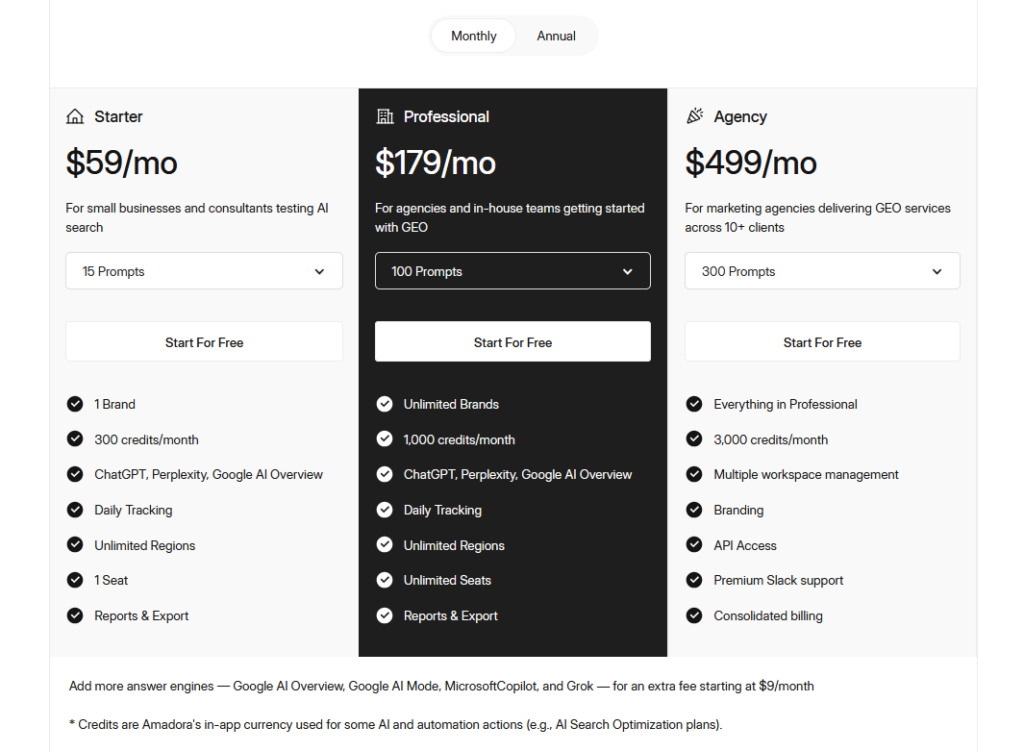

Amadora AI Pricing

Amadora AI Main Facts

Pros and Cons

- Pro: Source-level LLM citation analysis goes significantly deeper than surface visibility scores — you learn why you appear in AI results, not just whether you do

- Pro: Daily monitoring across five major AI engines (ChatGPT, Perplexity, Google AI Overviews, Gemini, Claude) catches behavioral shifts that weekly snapshots miss

- Pro: Built specifically for agency workflows — multi-client management, client-ready dashboards, and monthly GEO action plans are core features, not add-ons

- Pro: Prioritized action plans convert raw citation data into ranked, executable tasks rather than leaving interpretation to the user

- Pro: Intent categorization and competitor benchmarking add strategic context that pure visibility trackers lack

- Con: Pricing is completely undisclosed, creating friction for anyone doing a straightforward budget evaluation

- Con: Virtually no independent user reviews — 0 on Capterra, 47/100 on Crozdesk based on web data rather than verified feedback — making third-party validation difficult

- Con: The platform’s heavy agency orientation may make it over-engineered and potentially cost-prohibitive for solo practitioners or small in-house teams

- Con: Dependence on AI engine behavior means the platform’s utility is partly contingent on factors outside its control, as LLM citation patterns can shift significantly with model updates

Who Should Use Amadora AI?

Digital marketing and SEO agencies scaling AI services: This is Amadora’s clearest use case. If you are an agency that wants to offer AI search optimization as a retainer service — with audit deliverables, monthly reporting, and demonstrable ROI — the platform’s workflow tools, multi-client management, and GEO action plans are built precisely for this. The ability to show clients concrete citation data from ChatGPT and Perplexity is a meaningful differentiator in new business conversations.

Growth teams at mid-to-large brands in competitive categories: If your brand competes in a category where AI answer engines are increasingly the first touchpoint for buyers — SaaS, financial services, professional services, health — understanding why competitors are cited and you are not is strategically valuable. Amadora’s competitor benchmarking and intent categorization provide that structural intelligence.

Content strategists and digital PR teams: The source-level citation analysis — identifying which specific publications and content formats LLMs are drawing from — is directly actionable for content creation and digital PR outreach. If you want to influence AI citations rather than just measure them, knowing the exact external sources that carry weight is the starting point.

Who should look elsewhere: Freelance SEOs or solo in-house marketers on limited budgets will likely find Amadora’s agency-tier feature set more than they need, and the undisclosed pricing suggests it may not be priced for individual practitioners. Similarly, brands primarily competing in traditional search and not yet seeing meaningful traffic from AI answer engines may be better served by established SEO platforms before layering in AI-specific tooling.

Frequently Asked Questions

What does Amadora AI actually track?

Amadora AI tracks brand mentions, citations, and source references in AI-generated responses from ChatGPT, Perplexity, Google AI Overviews, Gemini, and Claude. Beyond counting mentions, it identifies the specific URLs, external publications, and content types that influenced each citation — daily, across a configurable set of queries relevant to each brand or client.

How is Amadora AI different from traditional SEO tools?

Traditional SEO tools measure rankings in standard search results — position tracking, backlink analysis, keyword difficulty scores. Amadora AI measures visibility in AI-generated responses, where there are no rankings, only citations. It reverse-engineers why an LLM cited a brand (or did not), which requires analyzing the model’s source references rather than SERP positions. Conductor and similar platforms have added AI monitoring features, but their citation analysis is less granular than Amadora’s according to available comparisons.

Is Amadora AI suitable for non-agency users?

The platform is optimized for marketing agencies managing multiple clients. Its core features — multi-client management, client-ready dashboards, monthly GEO action plans — are designed around agency delivery workflows. In-house teams at larger brands could extract value from the citation analysis and competitor benchmarking, but solo practitioners or small in-house teams may find the tool over-engineered relative to their needs.

What AI engines does Amadora AI support?

Based on available information, Amadora AI monitors five major AI answer engines: ChatGPT, Perplexity, Google AI Overviews, Gemini, and Claude. Daily monitoring across all five is a core part of the platform’s value proposition, as AI model behavior can shift significantly with updates and training data changes.

Does Amadora AI have a free trial or free tier?

No free trial or free tier is documented in any source I reviewed, including the official site, Capterra, Crozdesk, SourceForge, and Slashdot. Pricing details are entirely undisclosed publicly, which suggests either a custom agency quoting model or an early-access product still finalizing its commercial structure. The best path to pricing information is direct contact with the Amadora team.

How reliable is Amadora AI’s data given its limited review history?

This is a fair concern. Capterra has 0 verified reviews, and Crozdesk scores it 47/100 based on web presence data rather than user feedback. The platform is apparently new, and independent validation is thin. The methodology — scanning AI engines and analyzing source references — is technically sound in concept, but buyers relying on third-party social proof will find limited data available at this stage. Treating it as an emerging platform rather than an established one is the appropriate framing.

What is Generative Engine Optimization (GEO)?

GEO is the discipline of optimizing content and digital presence to improve citation frequency and quality in AI-generated responses — the AI equivalent of traditional SEO. Where SEO targets Google’s ranking algorithm, GEO targets the citation behavior of LLMs like ChatGPT and Perplexity. Amadora AI’s monthly GEO action plans are its primary vehicle for translating citation data into optimization strategy.

Final Verdict

Amadora AI is solving a real problem with a technically credible approach. The AI search landscape has shifted fast enough that most brands and agencies are still measuring visibility the old way — SERP rankings, organic traffic — while a growing share of discovery is happening inside AI-generated answers that traditional analytics cannot see. Amadora’s source-level citation analysis, multi-engine daily monitoring, and agency-ready action plans represent a genuinely different approach to this problem rather than a repackaged dashboard.

That said, the lack of pricing transparency and the near-total absence of independent user reviews are real obstacles to a confident recommendation. This is a platform I want to see broader adoption data on before calling it a clear buy. The tool’s methodology is sound, the agency positioning is logical, and the differentiation from competitors like Conductor or AI Brand Tracking is meaningful. But “trust us, it works” is a harder sell when Crozdesk scores you 47/100 and Capterra has zero verified reviews.

For agencies actively building AI visibility services and willing to invest in due diligence — including a direct pricing conversation — Amadora AI is worth evaluating seriously. Visit Amadora AI and request a demo. For everyone else, watch this space as the review record builds.